Manifesto

On ethics, compliance, and ivory towers. Ethaas's self-image. And where it derives from.

Axiom 01

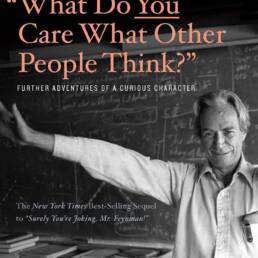

The Feynman approach: Go deep, even if it's 100% alien.

Good ethics assessments don’t begin with ethics. They begin with understanding. I delve into topics I was previously unfamiliar with – systematically. Quickly, but not superficially.

I can do this because my background is perfectly suited to it: I studied philosophy, art history, and musicology. I hold an MBA in innovation management and futures studies. I have over ten years of B2B marketing experience in niche fields ranging from hotel revenue management to financial scenario simulations. I also hold certificates in AI in Healthcare (Stanford), NIST AI Risk Management, Design Thinking, and Consumer Neuroscience.

This breadth keeps the mind sharp, and it is no accident: different ways of thinking, technical languages, and operational realities have to come together if one wants to understand unfamiliar contexts quickly and properly.

Because: A sound ethics assessment cannot merely scratch the surface. How does the product work? Where exactly do the regulations apply? What happens at the operational level – not at some meta-level? Just as success is often determined by operational details, EU compliance is determined where the rules actually have an effect.

Ergo:

What I don’t know, I learn – then I judge.

Understanding is not a prerequisite for efficiency. But it is for accuracy.

Superficial assessments are not assessments at all. They are token documents.

Axiom 02

Ethics is not a theory. But it's also not ivory tower babble.

It’s written a thousand times – in online articles, consultant presentations, and dubious position papers: ethics is a “theory.” That’s not true. And it’s no small matter that it’s not true.

Ethics lacks the central defining characteristic of theorizing: it cannot classify phenomena according to objectively recognizable regularities and, based on this, make predictions about future events. That is simply not its job.

But ethics is not a practice in the direct sense either. It is applied – not practiced. And it doesn’t come from an ivory tower: ethics allows us to build systems that help us evaluate good, bad – and yes, even foolish – actions.

Let’s not kid ourselves: Ethics doesn’t provide a user manual for every specific situation. With it, we build frameworks – general principles, standards, perhaps even preferential rules for typical problem situations.

Sometimes things are ‘meant nicely’ … but their result is bullshit. And sometimes things are completely bonkers even though the result is actually pretty good.

Sounds abstract? It isn’t. EU compliance means translating the negotiation processes between ethics, research, business, society, and law – based on decades of democratic practice – into corporate reality in such a way that regulations are not only adhered to but also provide guidance – and, ideally, reveal new courses of action.

This isn’t about theory. This is about responsible practice. About guidance for real decisions.

● Examples

Ethics and Compliance

Good examples ahead: how ethics & compliance can lead to better processes and products.

EU AI Act

AI-supported applicant selection

SaaS HR

Where it starts: The company uses a machine learning model that automatically evaluates CVs and selects candidates for initial interviews. According to the EU AI Act classification, the system falls under high-risk AI (Annex III, Employment).

Ethical challenge: Systematic bias towards applicants cannot be completely ruled out (for example, regarding gaps in the CV, ethnicity based on names, etc.).

Where it goes: The report recommends implementing a review process, for example requiring human review of all rejections (human-in-the-loop principle). Furthermore, explainability documentation (SHAP values) and a conformity assessment should be integrated into the process. Creating a separate internal AI Compliance Officer role appears ineffective; however, responsibility for AI compliance should be assigned to an existing role within the company.
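To make the human-in-the-loop idea concrete, here is a minimal sketch, not the client's actual implementation. The names `Screening`, `Decision`, `route`, and the `threshold` value are hypothetical and chosen only for illustration. The point it shows: the model may advance a candidate on its own, but it can never reject one without a person looking at the case.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ADVANCE = "advance"            # candidate proceeds to a first interview
    HUMAN_REVIEW = "human_review"  # a person must confirm or overturn


@dataclass(frozen=True)
class Screening:
    candidate_id: str
    score: float  # hypothetical model score in [0, 1]


def route(screening: Screening, threshold: float = 0.5) -> Decision:
    """Let the model advance candidates, but never reject them on its own."""
    if screening.score >= threshold:
        return Decision.ADVANCE
    # Every would-be rejection is handed to a human reviewer instead.
    return Decision.HUMAN_REVIEW
```

The design choice is that there is no automatic "reject" outcome at all, so a rejection can only ever come from the human review step.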

GDPR & Data Protection

Satellite Image Analytics

GeoTech / SaaS

Where it starts: The company analyzes high-resolution satellite images for clients in insurance, agriculture and urban planning – at this resolution, individual people and vehicles become recognizable.

Ethical challenge: Mass surveillance through the backdoor: Insurance customers came up with the idea of commissioning analyses for behavior pattern recognition on private properties.

Where it goes: The report recommends developing an internal use-case policy: automatic blurring of all individuals within 50 meters. The privacy-by-design principle could be integrated directly into the API layer – personal data features are removed server-side before the data reaches the customer. Further recommendation: Make a DPIA mandatory for every new contractual partner (→ GDPR Art. 25 fulfilled; DPIA process per Art. 35).
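What "removed server-side" could mean in practice, as a rough sketch under stated assumptions: detections arrive as dictionaries with a `class` label, and `PERSONAL_CLASSES` and `strip_personal_features` are hypothetical names, not part of any real product API.

```python
from typing import Iterable

# Hypothetical labels an image-analysis model might attach to detections.
PERSONAL_CLASSES = {"person", "vehicle"}


def strip_personal_features(detections: Iterable[dict]) -> list[dict]:
    """Drop detections that could identify individuals before responding."""
    return [d for d in detections if d.get("class") not in PERSONAL_CLASSES]
```

Because the filter runs before the response is assembled, the customer never receives the personal data features in the first place, rather than being asked not to use them.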

Medical Ethics

AI Diagnostic Assistance

MedTech

Where it starts: The company is developing an AI system that evaluates MRI scans and detects early signs of certain neurological diseases – with high sensitivity, and before clinical symptoms appear.

Ethical challenge: Predictive diagnoses without clinical confirmation: Patients receive probability scores for a disease for which there is no treatment. This raises questions about informed consent, psychological distress, and insurance discrimination.

Where it goes: The Ethaas recommendation is insufficient in this case – we lack the capacity for reviewing innovations in the medical field. A multi-stage informed consent process is nevertheless suggested. Furthermore, results should only be communicated to treating physicians, never directly to patients. And: A dedicated and qualified medical ethics committee should be involved as a permanent body.

Machine Safety

Collaborative Robotics

Industrial Tech

Where it started: The company sells robotic arms (especially for SMEs). The systems operate without a safety cage alongside human employees – classified according to Machinery Directive 2006/42/EC.

Ethical challenge: Small businesses configured the robots themselves and deactivated safety stop functions to shorten cycle times. This led to several near misses.

Where it went: The report recommended several technical and ethical measures: locking safety parameters against overwriting via firmware, introducing an onboarding certificate for operators, and possibly setting up an incident reporting system for employees of customer companies.